Evolution of NLP: Fragmented AI to Foundation Models

Definitions

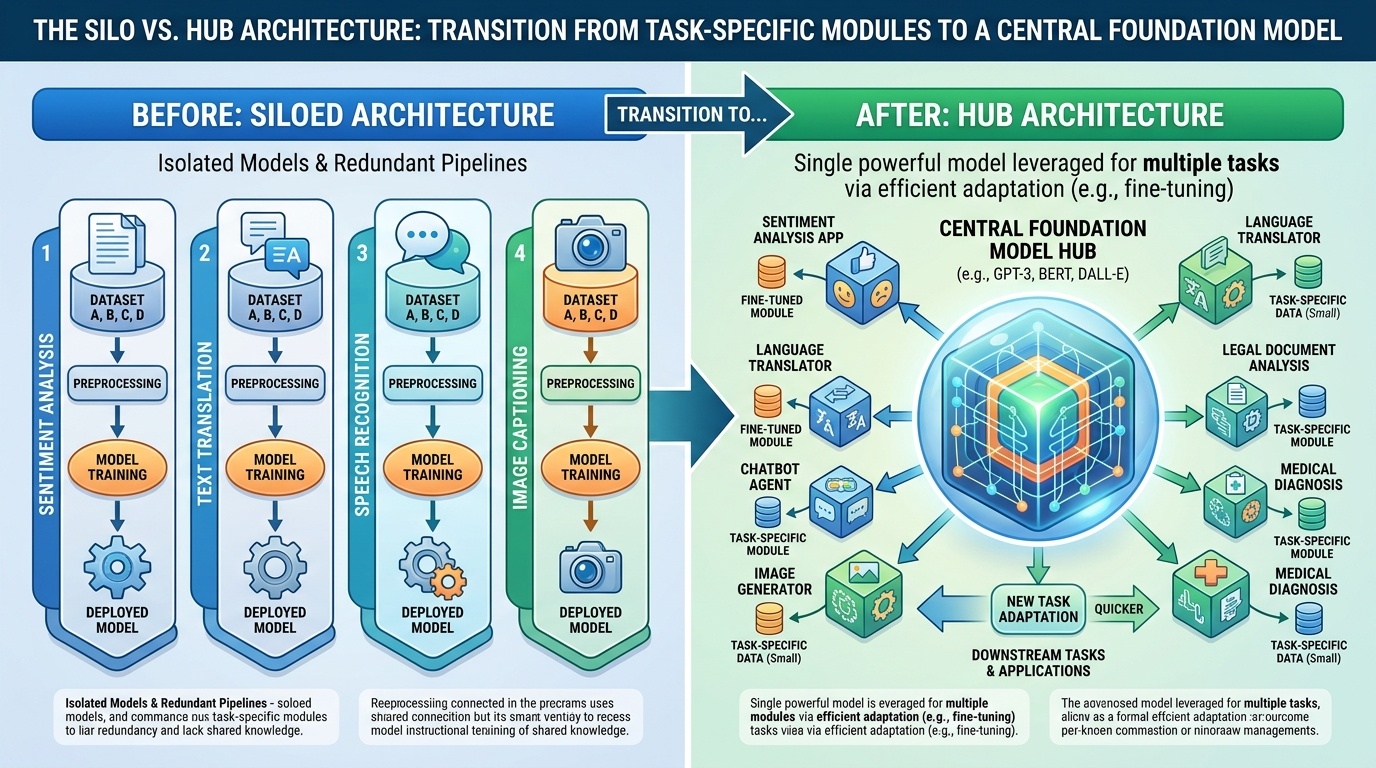

- Fragmented AI: An era defined by discrete, specialized neural architectures engineered for individual tasks like sequence labeling or classification.

- Foundation Model: A single, general-purpose model (in practice usually a transformer) that casts linguistic problems as generative text-to-text tasks, mapping an input string $x$ to an output string $y$ ($x \rightarrow y$).
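
To make the $x \rightarrow y$ notation concrete, one common formalization treats the model as an autoregressive distribution over the output tokens $y_1, \dots, y_T$. This is an assumption added for illustration: the parameters $\theta$ and the length $T$ are symbols introduced here, not part of the definitions above.

$$p_\theta(y \mid x) = \prod_{t=1}^{T} p_\theta(y_t \mid x, y_{<t})$$

Under that assumption, every task can share one training objective, the negative log-likelihood $-\sum_{t=1}^{T} \log p_\theta(y_t \mid x, y_{<t})$, regardless of whether $y$ encodes a label, a list of entities, or a translation.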

Core Concepts

- Architectural Consolidation: Historically, NLP required bespoke pipelines (e.g., Bi-LSTMs for NER, CNNs for sentiment classification). LLMs collapse these silos into a single backbone whose weights are shared across every task.

- The Unified Interface: LLMs replace specialized "output heads" (e.g., a 3-class softmax) with a natural language interface. Inputs and outputs are always strings, so the task is specified by the wording of the prompt rather than by the shape of the output layer.

- Knowledge Transfer: Traditional models started largely "tabula rasa" for each task. LLMs put generalization first: specific tasks become applications of a pre-existing internal representation of language acquired during pretraining.
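
The contrast between a fixed output head and a string-in, string-out interface can be sketched in plain Python. This is a minimal illustration under stated assumptions, not a real model: `classify_with_head`, `TASK_TEMPLATES`, `build_prompt`, `run_task`, and `echo_model` are hypothetical names, the prompt wording is invented, and `echo_model` merely stands in for an LLM call.

```python
import math

# Fragmented style: a sentiment head whose output shape is fixed to three classes.
SENTIMENT_LABELS = ["negative", "neutral", "positive"]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify_with_head(logits):
    """Map exactly three raw scores to one of the fixed sentiment labels."""
    if len(logits) != len(SENTIMENT_LABELS):
        raise ValueError("this head only accepts 3 logits")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return SENTIMENT_LABELS[best]


# Unified style: every task is expressed as a string and answered with a string.
# The templates below are hypothetical examples, not a standard prompt format.
TASK_TEMPLATES = {
    "sentiment": "Classify the sentiment (negative, neutral, positive): {text}",
    "ner": "List the named entities in: {text}",
    "translate": "Translate to French: {text}",
}


def build_prompt(task, text):
    """Turn a task name and an input text into a single prompt string."""
    return TASK_TEMPLATES[task].format(text=text)


def run_task(generate, task, text):
    """Send any task through the same string-to-string callable."""
    return generate(build_prompt(task, text))


def echo_model(prompt):
    """Placeholder standing in for an LLM; it only echoes the prompt."""
    return f"[model output for: {prompt}]"
```

Adding a new task to the head-based version means designing and training a new output layer, whereas in the unified version it only means adding one more template string and calling `run_task` with the same `generate` callable.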

Historical Context

- Pre-2018: Tasks were handled in isolation. Each one required its own model, trained with its own loss function $\mathcal{L}_{\text{task}}$.

- Modern Era: The "Text-to-Text" paradigm allows a single model (e.g., Llama 3) to switch tasks via zero-shot or few-shot prompting, without any change to its weights.
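
The difference between zero-shot and few-shot prompting comes down to how the input string is assembled. The sketch below assumes a simple `Input:` / `Output:` layout; that layout and the function names `zero_shot_prompt` and `few_shot_prompt` are illustrative choices, not a format required by any particular model.

```python
def zero_shot_prompt(instruction, query):
    """Instruction plus the query, with no worked examples."""
    return f"{instruction}\n\nInput: {query}\nOutput:"


def few_shot_prompt(instruction, examples, query):
    """Instruction, then (input, output) demonstrations, then the query."""
    if not examples:
        # With no demonstrations, few-shot reduces to zero-shot.
        return zero_shot_prompt(instruction, query)
    demos = "\n\n".join(f"Input: {x}\nOutput: {y}" for x, y in examples)
    return f"{instruction}\n\n{demos}\n\nInput: {query}\nOutput:"


if __name__ == "__main__":
    instruction = "Label the sentiment as positive or negative."
    examples = [("I loved it.", "positive"), ("Waste of time.", "negative")]
    print(few_shot_prompt(instruction, examples, "Not bad at all."))
```

In both cases the model weights stay untouched; only the prompt changes, which is what lets one model move between tasks.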

Python Implementation Comparison